Human factors

Identification and management of human factors are critical for the effective and reliable minimisation of risk. By understanding the human factors that influence employees, organisations can implement targeted solutions to improve human reliability, reduce error and mitigate its consequences.

Strategies designed to identify and optimise human factors can contribute to the reduction of risk to a level that is as low as reasonably practicable (ALARP). Such approaches will assist responsible parties in meeting many of their obligations under the OPGGS Act and associated Regulations.

‘Human error’ has long been identified as a contributing factor in incident causation. Commonly cited statistics claim that human error is responsible for between 70% and 100% of incidents. It may seem logical to blame such incidents on a person or small group of people and to focus remedial actions at the individual level (e.g. training, disciplinary action). However, this approach to addressing human error ignores the latent conditions in work systems that trigger human error across the workforce.

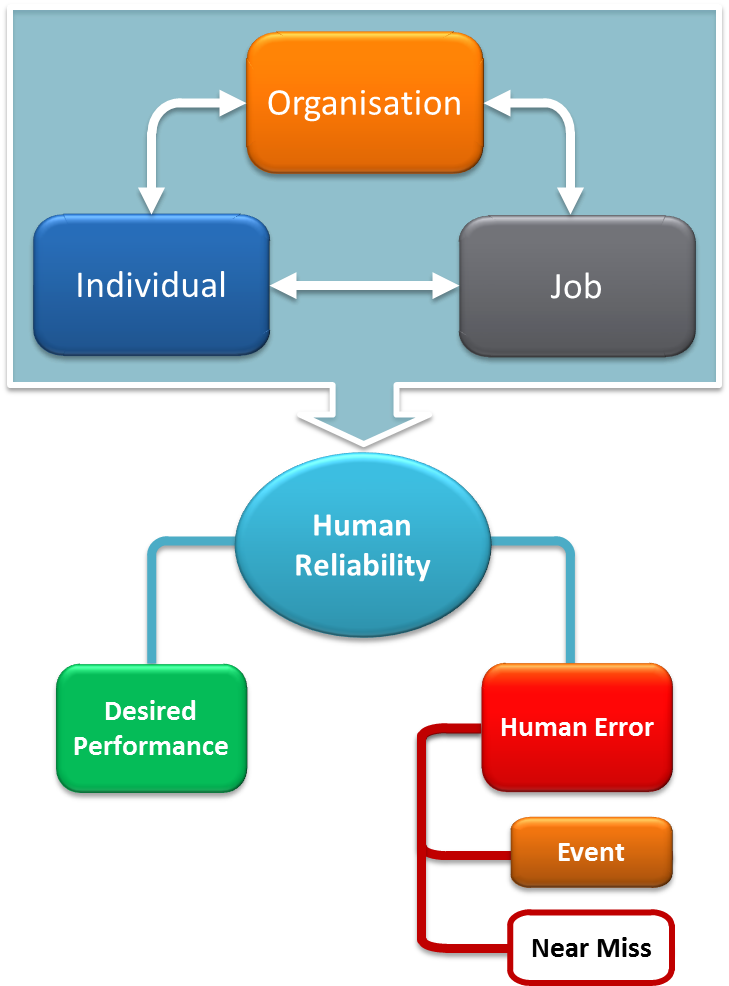

Human error should be understood as an outcome of poor human reliability, where human reliability is the likelihood that an individual will perform their task effectively. Organisational, job, and individual factors all interact to influence human reliability. These performance shaping factors (PSFs) are relevant to performance across safety, integrity and environmental management.

NOPSEMA defines human factors as 'the ways in which the organisation, the job, and the individual interact to influence human reliability in hazardous event causation.' This interaction is outlined in the diagram below.

A model of human factors

Human Error is commonly defined as a failure of a planned action to achieve a desired outcome. Performance shaping factors (PSFs) exist at individual, job, and organisational levels, and when poorly managed can increase the likelihood of an error occurring in the workplace. When errors occur in hazardous environments, there is a greater potential for things to go wrong. By understanding human error, responsible parties can plan for likely error scenarios, and implement barriers to prevent or mitigate the occurrence of potential errors.

Errors result from a variety of influences, but the underlying mental processes that lead to error are consistent, allowing for the development of a human error typology. An understanding of the different error types is critical for the development of effective error prevention and mitigation tools and strategies. A variety of these tools and strategies must be implemented to target the full range of error types if they are to be effective.

Errors can occur in both the planning and execution stages of a task. Plans can be adequate or inadequate, and actions (behaviour) can be intentional or unintentional. If a plan is adequate, and the intentional action follows that plan, then the desired outcome will be achieved. If a plan is adequate, but an unintentional action does not follow the plan, then the desired outcome will not be achieved. Similarly, if a plan is inadequate, and an intentional action follows the plan, the desired outcome will again not be achieved. These error points are demonstrated in the figure below and explained in the example that follows.

Human error typology

Failures of action, or unintentional actions, are classified as skill-based errors. This error type is categorised into slips of action and lapses of memory. Failures in planning are referred to as mistakes, which are categorised as rule-based mistakes and knowledge-based mistakes.
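The planning and execution logic described above can be sketched as a small lookup. This is an illustrative sketch only: the names `Result` and `classify` are hypothetical, and the fourth combination (an inadequate plan together with an unintentional action) is not discussed in the text, so the sketch simply flags it rather than assigning it a type.

```python
from enum import Enum


class Result(Enum):
    DESIRED_OUTCOME = "desired outcome achieved"
    SKILL_BASED_ERROR = "skill-based error (slip of action or lapse of memory)"
    MISTAKE = "mistake (rule-based or knowledge-based)"
    NOT_COVERED = "combination not described in the text"


def classify(plan_adequate: bool, action_intentional: bool) -> Result:
    """Map a plan/action combination onto the error typology.

    plan_adequate: the plan would achieve the desired outcome if followed.
    action_intentional: the action carried out was the one intended by the plan.
    """
    if plan_adequate and action_intentional:
        # Adequate plan, followed as intended.
        return Result.DESIRED_OUTCOME
    if plan_adequate:
        # Adequate plan, but an unintentional action departed from it.
        return Result.SKILL_BASED_ERROR
    if action_intentional:
        # The plan was followed, but the plan itself was inadequate.
        return Result.MISTAKE
    # Assumption: the source does not describe this case, so it is flagged.
    return Result.NOT_COVERED
```

For example, `classify(True, False)` returns `Result.SKILL_BASED_ERROR`, matching the case of an adequate plan that is not followed because of an unintentional action.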

Skill-based errors

Skill-based errors tend to occur during highly routine activities, when attention is diverted from a task, either by thoughts or external factors. Generally when these errors occur, the individual has the right knowledge, skills, and experience to do the task properly. The task has probably been performed correctly many times before. Even the most skilled and experienced people are susceptible to this type of error. As tasks become more routine and less novel, they can be performed with less conscious attention – the more familiar a task, the easier it is for the mind to wander. This means that highly experienced people may be more likely to encounter this type of error than those with less experience. This also means that re-training and disciplinary action are not appropriate responses to this type of error.

A memory lapse occurs after the formation of the plan and before execution, while the plan is stored in the brain. This type of error refers to instances of forgetting to do something, losing place in a sequence, or even forgetting the overall plan.

A slip of action is an unintentional action. This type of error occurs at the point of task execution, and includes actions performed on autopilot, skipping or reordering a step in a procedure, performing the right action on the wrong object, or performing the wrong action on the right object. Typical examples include:

- missing a step in an isolation sequence
- pressing the wrong button or pulling the wrong lever
- loosening a valve when intending to tighten it
- transposing digits when copying numbers (e.g. writing 0.31 instead of 0.13)

Slips and lapses can be minimised and mitigated through workplace design, effective fatigue management, use of checklists, independent checking of completed work, discouraging interruptions, reducing external distractions, and active supervision.

Mistakes

Mistakes are failures of planning: the plan is expected to achieve the desired outcome but, because of inexperience or poor information, it is not appropriate. People with less knowledge and experience may be more likely to make mistakes. Mistakes are not committed ‘on purpose’; as such, disciplinary action is an inappropriate response to these types of error.

Mistakes can be minimised and mitigated through robust competency assurance processes, good quality training, proactive supervision, and a team climate in which co-workers are comfortable observing and challenging each other.

Mistakes can be rule-based or knowledge-based. The different types of mistakes are explained below through the use of an example from NOPSA Safety Alert 28, where a construction vessel failed to avoid a cyclone. This example demonstrates how multiple errors at various levels of an organisation can interact to lead to a hazardous event.

Knowledge-based mistakes result from ‘trial and error’. In these cases, insufficient knowledge about how to perform a task results in the development of a solution that is incorrectly expected to work.

Rule-based mistakes refer to situations where the use or disregard of a particular rule or set of rules results in an undesired outcome.

Some rules that are appropriate for use in one situation will be inappropriate in another. Incorrect application of a good rule occurs when a rule has worked well on previous occasions, so it is applied to a similar situation with the incorrect expectation that it will work.

Violations

Failure to apply a good rule is also known as a violation. Violations are classified as human error when the intentional action does not achieve the desired outcome, or results in unanticipated adverse consequences. Violations tend to be well-intentioned, targeting desired outcomes such as task completion and simplification. Where violations involve acts of sabotage designed to cause damage, the planned action (violation) has achieved the desired outcome (damage). This type of behaviour does not constitute human error and, following investigation, should be managed through the application of appropriate disciplinary measures. There are three main types of violations pertaining to human error: routine, situational, and exceptional.

A routine violation is one which is commonplace and committed by most members of the workplace. For example, in a particular office building it is against the rules for personnel to use the fire escape stairwell to move between floors, but it is common practice for people to do so anyway.

A situational violation occurs, as its name suggests, in response to situational factors, including excessive time pressure, workplace design, and inadequate or inappropriate equipment. When confronted with an unexpected or inappropriate situation, personnel may believe that the normal rule is no longer safe, or that it will not achieve the desired outcome, and so they decide to violate that rule. Situational violations generally occur as a once-off, unless the situation triggering the violation is not corrected, in which case the violation may become routine over time.

An exceptional violation is a fairly rare occurrence and happens in abnormal and emergency situations. This type of violation transpires when something is going wrong and personnel believe that the rules no longer apply, or that applying a rule will not correct the problem. Personnel choose to violate the rule believing that they will achieve the desired outcome.

Preventing violations requires an understanding of how motivation drives behaviour. Planned behaviour (intentional action) is driven by an individual’s attitude towards that behaviour. Further, individual decision-making is primarily influenced by the consequences the individual expects to receive as a result of their behaviour, which can influence their attitude towards that behaviour.

In most organisations, consequences associated with risk management behaviours compete against those associated with productivity behaviours. While ‘Safe Production’ is a popular phrase, risk management activities necessarily increase the amount of time required to complete a task. Productivity outcomes are generally more predictable and definitive than those associated with risk management (i.e. definitely achieving a target versus potentially avoiding an incident). So the perceived value of productivity behaviour may be greater than that of risk management behaviour.

Violations can be minimised and prevented through education about risks and consequences, training in 'why' as well as 'how', the use of decentralised decision-making structures, dedicated site-based roles for approving procedure modifications, allowing sufficient time for risk management activities, the use of lead indicators as targets, and active workforce involvement in the development of rules and procedures that will affect them.

Note: Violations are classified as human error only when they fail to achieve the desired outcome. Where a violation does achieve the desired outcome, and does not cause any other undesired outcomes, this is not human error. These types of violations may include violation of a bad rule, such as a procedure that, if followed correctly, would trip the plant. In such cases, a review of the rules and procedures is advisable.
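The rule for when a violation counts as human error can be written as a short decision function. This is a sketch under stated assumptions: the function name, parameter names and returned labels are hypothetical and are only meant to restate the conditions given in the text.

```python
def assess_violation(intended_harm: bool,
                     achieved_desired_outcome: bool,
                     caused_other_undesired_outcomes: bool) -> str:
    """Restate the text's conditions for treating a violation as human error."""
    if intended_harm:
        # Sabotage: the damage was the desired outcome, so this is not human
        # error; the text calls for investigation and disciplinary measures.
        return "not human error: sabotage"
    if not achieved_desired_outcome or caused_other_undesired_outcomes:
        # The intentional action failed, or had unanticipated adverse effects.
        return "human error: violation"
    # The violation worked and caused no other harm; the rule itself may be
    # poor, so a review of rules and procedures is advisable.
    return "not human error: review the rule or procedure"
```

A well-intentioned shortcut that completes the task but causes unanticipated damage would be assessed with `assess_violation(False, True, True)`, which returns `"human error: violation"`.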

The concept of Human Reliability Analysis (HRA) reflects an understanding that people and systems are not error-proof, and that improved reliability requires an understanding of error problems, leading to improved mitigation strategies. Essentially, HRA aims to quantify the likelihood of human error for a given task. HRA can assist in identifying vulnerabilities within a task, and may provide guidance on how to improve reliability for that task.

A number of HRA techniques have been developed for use in a variety of industries, many of which are freely available. A review of HRA methods has been published by the U.K. Health and Safety Executive. Generally, HRA tools calculate the probability of error for a particular type of task, while taking into account the influence of performance shaping factors. Quantitative techniques refer to databases of human tasks and associated error rates to calculate an average error probability for a particular task. Qualitative techniques guide a group of experts through a structured discussion to develop an estimate of failure probability, given specific information and assumptions about tasks and conditions.

The basic process

A hierarchical task analysis is conducted on critical activities (i.e. activities with the potential to cause a hazardous event), and starts with the identification of individual tasks and steps within an activity. Potential errors associated with specific steps are then highlighted, often through the use of keyword prompts identifying possible error mechanisms (e.g. step skipped, right action on wrong object, wrong action on right object, transposed digits). Once the possible error mechanisms have been identified, associated error probabilities can be estimated. A typical quantitative approach first identifies the nominal error rate for the task type. Task types vary between tools and may be very specific or quite general. The influence of relevant performance shaping factors is then calculated for the task. Performance shaping factors may increase or decrease the likelihood of error for the task in question. The overall error probability figure reflects the average error rate for the task type, while accounting for the influence of relevant situational factors. Once potential sources of error have been identified, actions can be developed to minimise or mitigate their impact and improve the reliability of human performance within the task.
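The quantitative step described above (a nominal error rate adjusted by performance shaping factors) can be illustrated with a minimal sketch. It assumes a simple multiplicative adjustment, which the text does not specify and which individual HRA tools implement in different ways. The function name, the factor labels and all numbers are hypothetical and chosen only for illustration.

```python
from math import prod


def estimate_error_probability(nominal_rate: float,
                               psf_multipliers: dict[str, float]) -> float:
    """Scale a nominal error rate by performance shaping factor multipliers.

    Assumption: each multiplier above 1 raises the likelihood of error and
    each multiplier below 1 lowers it. The factors are treated as independent,
    so interactions between them are not modelled.
    """
    if not 0.0 <= nominal_rate <= 1.0:
        raise ValueError("nominal_rate must be a probability between 0 and 1")
    if any(m <= 0 for m in psf_multipliers.values()):
        raise ValueError("multipliers must be positive")
    adjusted = nominal_rate * prod(psf_multipliers.values())
    # A probability cannot exceed 1, so the result is capped.
    return min(adjusted, 1.0)


# Hypothetical example values, not drawn from any HRA database.
example = estimate_error_probability(
    0.01, {"fatigue": 3.0, "clear procedure": 0.5}
)
```

Here the hypothetical nominal rate of 0.01 is scaled by 3.0 × 0.5 = 1.5, giving an estimate of about 0.015. Treating the factors as independent multipliers is a simplification and should be kept in mind when interpreting any figure of this kind.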

Limitations of HRA data

As with any approach to risk quantification, the data generated during an HRA should be interpreted with an understanding of the limitations of the particular methodology in use. In general, the following points may assist in determining the applicability of HRA data:

- The quality of the analysts can influence the validity of the HRA findings. Consider how long they have worked as an analyst, their experience with the method in question, their experience with the industry and their knowledge of the organisation and particular workplace/s.
- The assumptions made by the analysts will have a significant bearing on their findings. Assumptions should be articulated within the final report and taken into consideration when reviewing the findings.
- The type of task subject to the analysis can influence the validity of findings. Low level tasks that are highly routine and carried out in well controlled environments tend to be better understood, and therefore probabilities can be more accurate. However, probabilities may be less accurate for tasks that are carried out in more complex environments or for tasks which require interpretation of multiple and contradictory sources of data.
- The data sources used to calculate probabilities may not be relevant when analysing tasks associated with the use of very new technology. It is possible that the types and frequencies of errors associated with the use of new technology may vary significantly from those contained within the databases referenced during the analysis.
- The impact of organisational context is generally not well represented in most quantitative HRA methods. An additional qualitative approach may be required to improve accuracy of probabilities and predictions where organisational context is likely to have a significant impact on error.
- The relevance of performance shaping factors assessed within the analysis should be considered, along with the potential impact of other factors that are not part of the analysis methodology used. Additionally, the interaction between performance shaping factors does not appear to be represented in most methods, so consideration should be given as to how such interactions can be incorporated into the overall analysis.
- Consider a combination of quantitative and qualitative methods as a way of facilitating more robust and reliable analysis.

In addition to the strengths and limitations of various analysis methods, consideration should also be given to the ways in which the analysis findings will be applied. Calculation of error probabilities alone is likely to be of limited use, particularly when considering the factors impacting the validity of such calculations. In particular, caution should be exercised if using error probabilities as cut-off points (e.g. our error probability must be below x to perform this task).

A proactive approach

Useful information is generated at all points throughout the HRA process, which can provide valuable guidance to organisations if applied appropriately. HRA information can be used in a number of proactive approaches, including:

- during front end engineering and detailed design to identify design options that are likely to reduce opportunities for error
- during system modifications and other relevant changes to determine whether the changes are likely to impact error in either direction
- to assist in the identification of commonly experienced performance shaping factors, enabling the organisation to address these at a tertiary level
- during incident investigations to identify contributing latent conditions
- at a workplace level, identifying and modifying those performance shaping factors which contribute to error.

Human performance is inherently unreliable: people will always make errors. The best cases of human reliability observed in the workforce report error rates of around one in every 100 steps for routine procedure-based tasks, and one in every ten steps for more complex non-routine work, such as critical alarm diagnosis and response. As task complexity increases, so does the associated error rate.
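To see what these per-step rates imply over a whole task, the sketch below computes the chance of at least one error across a number of steps. It assumes that every step has the same error rate and that errors at different steps are independent. Both are simplifying assumptions that the text does not make, and the function name is hypothetical.

```python
def probability_of_at_least_one_error(per_step_error_rate: float,
                                      steps: int) -> float:
    """Chance of one or more errors across `steps` independent steps."""
    if not 0.0 <= per_step_error_rate <= 1.0:
        raise ValueError("per_step_error_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    # The chance that every step succeeds is (1 - rate) ** steps, so the
    # chance of at least one error is the complement of that.
    return 1.0 - (1.0 - per_step_error_rate) ** steps
```

Under these assumptions, a routine 100-step procedure at one error per 100 steps has roughly a 63% chance of containing at least one error, and a ten-step non-routine task at one error per ten steps has roughly a 65% chance. This is one way to see why opportunities for error identification and recovery matter even when per-step reliability is high.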

While it is inevitable that a workforce will experience errors, certain factors will influence the rate of error, either positively or negatively. These performance shaping factors fall into three basic categories:

- Individual factors include personality, competence and skill, mood, attitude, mental ability, and individual health factors, such as fatigue, drugs and alcohol, physical capability, and psychological health.
- Job factors include the physical working environment, human-machine interface, workload, availability and quality of procedures, the equipment being used, task requirements, and team member behaviour.
- Organisational factors include organisational priorities, decision-making and strategy, the culture of the company or team, the availability of resources, communication systems, change management, leadership behaviour, and relevant Key Performance Indicators.

The interaction of factors within and between categories can be complex and difficult to manage. Management of human factors should not be delegated to individual supervisors or line managers, or to safety personnel. An integrated organisational approach is needed to ensure that high-level decisions do not create error-inducing factors, as front-line approaches cannot fully mitigate the impact of such decisions.

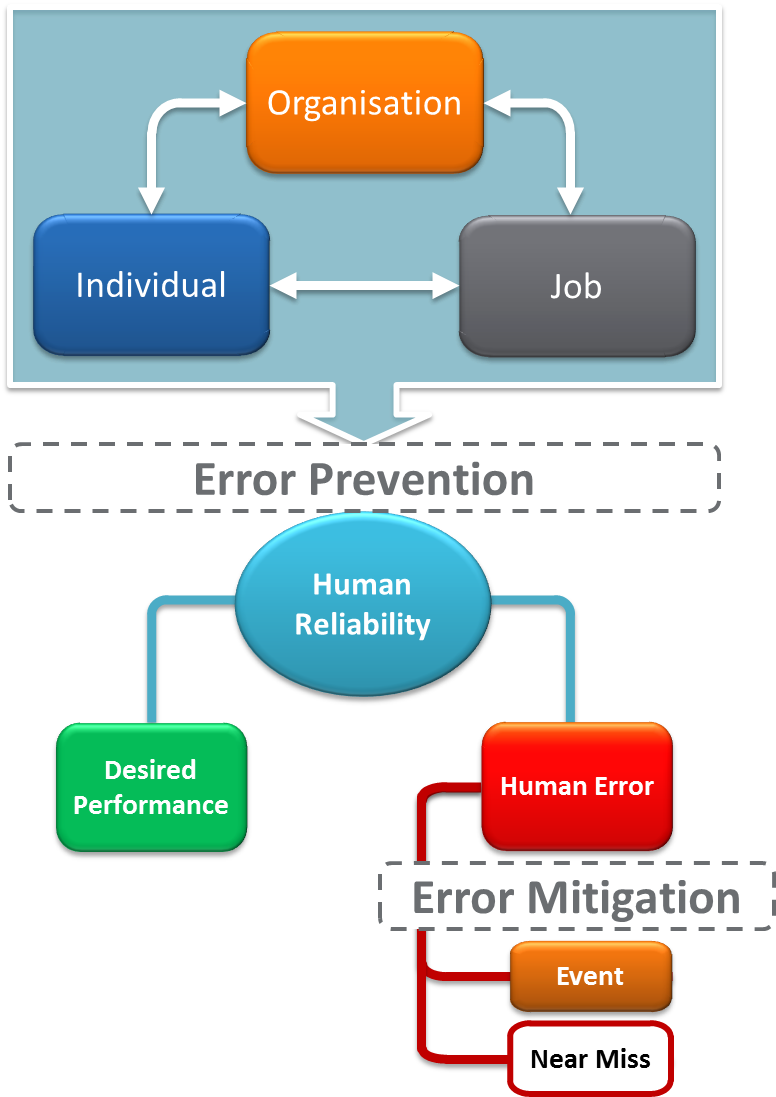

To reduce error risk to a level that is ALARP, risk management strategies should seek both to reduce error likelihood and prevent error escalation. Error prevention strategies should focus on improving human reliability via performance shaping factors, while error mitigation strategies should provide opportunities for error identification and recovery, as outlined in the diagram below.

Error prevention strategies include:

- Human factors in engineering and design
- Competency assurance
- Task-based training
- Procedural controls
- Hazard identification and risk assessment activities

Error mitigation strategies include:

- Alerts and alarms
- Error management training
- Secondary checking
- Engineering controls such as automatic trips

Strategies that can facilitate both prevention and mitigation of error include:

- Active supervision
- Job observation and feedback
- Drills and simulations
- Fatigue risk management
- Proactive planning and resource allocation
- Monitoring and control of substances such as medications, alcohol and illicit drugs

Human factors and error risk reduction

Related Documents

| Title | Size | Date |
|---|---|---|
| Human Factors information paper - Critical task analysis | 657.04 KB | 27/01/2026 |
| Critical task analysis information paper | 510.27 KB | 10/12/2025 |
| Human error risk reduction to ALARP information paper | 491.73 KB | 09/12/2025 |
| Human factors in accident investigations information paper | 382.59 KB | 09/12/2025 |
| Safety culture information paper | 418.47 KB | 09/12/2025 |
| Complex decision making information paper | 502.3 KB | 25/05/2020 |
| Personnel resourcing information paper | 549.52 KB | 21/05/2020 |
| Procedures and instructions information paper | 611.02 KB | 21/05/2020 |
| Perception surveys information paper | 568.97 KB | 21/05/2020 |
| Engineering and design information paper | 712.33 KB | 21/05/2020 |
| Competency assurance information paper | 916.13 KB | 21/05/2020 |
| Change management information paper | 494.21 KB | 21/05/2020 |